
Cisco

Here's a bunch of Cisco related notes to help with CCNA studies. Wish me luck!

For some Packet Tracer labs, go here.

20% 1.0 Network Fundamentals

20% 2.0 Network Access VLAN, Etherchannel, Spanning tree, Wireless/WLC

25% 3.0 IP Connectivity static routes, dynamic routes, OSPF, EIGRP, RIP

10% 4.0 IP Services

15% 5.0 Security Fundamentals

10% 6.0 Automation and Programmability

VLAN - Virtual Local Area Network

At a basic level, a Virtual Local Area Network is a way to arrange a set of devices on a network into different logical and virtual networks.

For example, if you had an office with PCs from different departments and connected them all together through one switch or hub, every broadcast (and, on a hub, every frame) would reach every PC, potentially causing congestion and inefficient use of the network.

Arranging the PCs into logical groups so they would form their own little “virtual LANs” helps ease congestion. It also helps with security.

Cisco expands on this idea in much more technical detail…

Basic Switch Operations - review

In normal Layer 2 switch operations, a switch takes an ethernet frame it receives, checks the destination MAC address and sends the frame out of the matching port. It learns the MAC addresses of hosts connected to it from the source addresses of incoming frames, notes the interface each one is connected to and keeps a record in its MAC address table. The switch then knows that a particular MAC address is reachable through a particular port.

If it receives a unicast frame (frame from one single node to another single node), the switch checks for the destination MAC address, then sends it to the destination interface.

If the switch receives a broadcast (destination MAC address FFFF.FFFF.FFFF), the switch will flood the frame to all ports except for the port it received the frame from. ARP uses this flooding to FFFF.FFFF.FFFF to discover MAC addresses.
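The learn, forward and flood behaviour described above can be sketched in a few lines of Python. This is a toy model only: the `Switch` class, port names and MAC addresses are made up for illustration, and it ignores MAC table ageing and VLANs.

```python
BROADCAST = "ffff.ffff.ffff"


class Switch:
    """Toy Layer 2 switch: learns source MACs, then forwards or floods frames."""

    def __init__(self, ports):
        self.ports = list(ports)
        self.mac_table = {}  # MAC address -> port it was last seen on

    def receive(self, in_port, src_mac, dst_mac):
        """Return the list of ports the frame is sent out of."""
        # Learn (or refresh) which port the sender is reachable through.
        self.mac_table[src_mac] = in_port
        # Known unicast: forward out of the one learned port.
        if dst_mac != BROADCAST and dst_mac in self.mac_table:
            return [self.mac_table[dst_mac]]
        # Broadcast or unknown unicast: flood everywhere except the ingress port.
        return [p for p in self.ports if p != in_port]


sw = Switch(["f0/1", "f0/2", "f0/3"])
arp_request = sw.receive("f0/1", "aaaa.aaaa.aaaa", BROADCAST)
arp_reply = sw.receive("f0/2", "bbbb.bbbb.bbbb", "aaaa.aaaa.aaaa")
print(arp_request)  # ['f0/2', 'f0/3']
print(arp_reply)    # ['f0/1']
```

The ARP request is flooded because it is a broadcast; the reply is forwarded out of a single port because the switch learned the requester's MAC address from the first frame.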

Why we need VLANs

The problem with flooding is that the frame literally goes everywhere. It will be transmitted to all hosts inside the broadcast domain. In smaller networks this may not be too big of an issue. However, larger networks will be affected, as many hosts will spend time having to ignore and discard broadcasts not intended for them.

Virtual Local Area Networks (VLANs) offer a way of dividing the single broadcast domain into smaller ones. Each broadcast domain is then assigned a VLAN ID. Any broadcast traffic is then only flooded out to switchports within its own VLAN.

Cisco Networking Academy Switched Networks Companion Guide: VLANs https://www.ciscopress.com/articles/article.asp?p=2208697&seqNum=4

How VLANs work

On a switch, everything basically starts out as one big VLAN. In fact by default, on Cisco switches, all interfaces are assigned to VLAN 1. (If you never configured any additional VLANs, the switch would just carry on working as you would expect).

If you want additional VLANs, you create the VLANs in the switch's configuration, then assign the desired interfaces on the switch to your VLAN.

Each VLAN is assigned a number. You can give it a descriptive name if you want too, e.g. Sales, Support, Accounts, Management, Admin etc.

Once a switch is set up with the VLANs, traffic would only go to the interfaces on that same VLAN. It splits a big broadcast domain into smaller broadcast domains. This is how it helps with network efficiency and security.
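As a rough sketch of how VLAN membership limits flooding, the Python below floods a broadcast only to ports in the same VLAN as the port it arrived on. The port-to-VLAN assignment is hypothetical and the model ignores trunk ports.

```python
# Hypothetical access port assignments; a port left alone would stay in VLAN 1.
port_vlan = {"f0/1": 10, "f0/2": 10, "f0/3": 20, "f0/4": 20, "f0/5": 1}


def flood_ports(in_port):
    """Ports a broadcast received on in_port is flooded out of."""
    vlan = port_vlan[in_port]
    return [p for p, v in port_vlan.items() if v == vlan and p != in_port]


print(flood_ports("f0/1"))  # ['f0/2']
print(flood_ports("f0/3"))  # ['f0/4']
print(flood_ports("f0/5"))  # []
```

A broadcast from a VLAN 10 host never reaches the VLAN 20 ports, which is the "smaller broadcast domains" effect described above.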

What is VLAN tagging and What are trunk ports (tagged ports)?

Frames received on a switch often need to be sent on to other switches and routers.

If a frame originated from one particular VLAN and was forwarded to another switch literally as it was, the other switch would not know it was originally part of a VLAN, so you would lose the benefit of implementing a VLAN.

To solve this, VLAN tagging carries the VLAN information within the frame, so the next switch along knows which VLAN it belongs to and proceeds accordingly. A VLAN header is added to the frame (thus “tagging” the frame) before it is transmitted.

A receiving switch should only forward any tagged frames to interfaces belonging to the same VLAN.

Any VLAN tags are removed upon final transmission to the destination host. End hosts would be unaware that any tagging or VLAN exists.

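A trunk is a link between two switches that carries frames for multiple VLANs, identified by their VLAN tags. The interfaces at each end are configured as trunk ports and the switches are linked together, traditionally with a crossover cable (most modern switch ports detect this automatically with Auto-MDIX).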

Different tagging schemes exist but the most common scheme is IEEE 802.1Q, or simply “Dot1Q”.
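To make the tag itself less abstract, here is a small Python sketch that builds and parses the 4-byte 802.1Q tag: a 16-bit TPID of 0x8100 followed by a 3-bit priority, a 1-bit drop eligible indicator and a 12-bit VLAN ID. The function names are made up for illustration; a real switch does this in hardware.

```python
import struct

TPID_DOT1Q = 0x8100  # EtherType value that marks a frame as 802.1Q tagged


def build_tag(vlan_id, priority=0, dei=0):
    """Return the 4-byte 802.1Q tag for the given VLAN ID and priority."""
    if not 1 <= vlan_id <= 4094:  # 0 and 4095 are reserved
        raise ValueError("VLAN ID must be between 1 and 4094")
    tci = (priority << 13) | (dei << 12) | vlan_id
    return struct.pack("!HH", TPID_DOT1Q, tci)


def parse_tag(tag):
    """Split a 4-byte 802.1Q tag back into its fields."""
    tpid, tci = struct.unpack("!HH", tag)
    if tpid != TPID_DOT1Q:
        raise ValueError("not an 802.1Q tag")
    return {"priority": tci >> 13, "dei": (tci >> 12) & 1, "vlan_id": tci & 0xFFF}


tag = build_tag(vlan_id=10, priority=5)
print(tag.hex())       # 8100a00a
print(parse_tag(tag))  # {'priority': 5, 'dei': 0, 'vlan_id': 10}
```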

Some switches and routers also support other types of tagging, such as Cisco's older proprietary ISL, so you may need to explicitly specify Dot1Q.

Some switches will require you to set up a trunk port with these commands:

! in interface config

switchport trunk encapsulation dot1q

switchport mode trunk

You may require explicit dot1q declaration for setting up Router On A Stick too.

Some devices, typically ones that only support Dot1Q, might throw up an error message if you declare dot1q, but it should not cause any harm to the config.

http://www.firewall.cx/networking-topics/vlan-networks/219-vlan-tagging.html

Inter-VLAN routing

Traffic between different VLANs and different subnets will be separated from each other, because that's the whole point of a VLAN! You can't get a device on one VLAN to talk to a device on another VLAN, not without some help. You would require a way to apply inter-VLAN routing. When you need to route traffic between different VLANs there are 3 common solutions:

- Router with separate interfaces

- “Router On A Stick”

- Layer 3 switch (Multilayer Switch)

Router With Separate Interfaces

This is the most obvious and basic solution. Different VLANs will have their own subnets and routers join subnets together.

This method uses an interface for each subnet.

However this solution does not scale very well when you have many VLANs.

You are limited by the number of physical interfaces on the router. Some routers can be upgraded with additional interfaces, but there is still a limit.

Router On A Stick

This uses subinterfaces on a router to allow for more than one subnet to be assigned to a router's interface.

This scales a little better than having a router interface per VLAN. However, all inter-VLAN traffic still has to physically traverse the single ethernet cable to the router and back, so that link can become a bottleneck.

n.b. this is probably the most likely thing you will be tested on in the CCNA exam!

Layer 3 Switch / Multilayer Switch

Your regular Layer 2 switch WON'T WORK for this. This uses Switched Virtual Interfaces (SVIs). The clients set the default gateway for traffic outside of their subnet to these SVIs. The switch will then route inter-VLAN traffic through its backplane.

This is the most likely real world solution for inter-VLAN routing you'll use.

https://www.cisco.com/c/en/us/support/docs/lan-switching/inter-vlan-routing/41860-howto-L3-intervlanrouting.html

https://ipcisco.com/lesson/switch-virtual-interfaces-ccnp/

https://networklessons.com/switching/intervlan-routing

Cisco recommended practices for VLANs https://www.cisco.com/c/en/us/support/docs/smb/routers/cisco-rv-series-small-business-routers/1778-tz-VLAN-Best-Practices-and-Security-Tips-for-Cisco-Business-Routers.html

https://www.reddit.com/r/networking/comments/yxbrfn/is_there_a_scenario_in_which_8021q_tags_are/?utm_medium=android_app&utm_source=share

VLANs and IP phones

Further reading: OCG

VTP

VLAN Trunking Protocol (VTP) is a system allowing you to manage VLANs from one place. It is helpful if you have a complex VLAN setup. Updates and changes can be done from one place instead of having to update every switch individually. A VTP server is set up on a switch, then other switches are set up as VTP clients.

DHCP - Dynamic Host Configuration Protocol

Cisco IOS DHCP, external DHCP server, clients, “helper-address”, Option 43 for wireless LAN controllers

To do: a step-by-step section on how a client sends out a DHCP request and how a DHCP server fulfils it (DORA: Discover, Offer, Request, Acknowledge).

https://www.cisco.com/c/en/us/td/docs/ios-xml/ios/ipaddr_dhcp/configuration/xe-3se/3850/dhcp-xe-3se-3850-book/config-dhcp-server.html

Hot Standby Router Protocol (HSRP)

Hot Standby Router Protocol (HSRP) is one of the First Hop Redundancy protocols (FHRP).

They assign a group of redundant routers a “virtual IP address”, with clients using this IP address as their default gateway. Should one of the routers in the group go offline, one of the other routers adopts the virtual IP address and routing can continue proceeding as normal.

https://www.cisco.com/c/en/us/products/ios-nx-os-software/first-hop-redundancy-protocol-fhrp/index.html

https://www.cisco.com/c/en/us/td/docs/ios-xml/ios/ipapp_fhrp/configuration/xe-16/fhp-xe-16-book/fhp-hsrp.html

Spanning Tree Protocol (STP)

https://www.cisco.com/c/en/us/support/docs/lan-switching/spanning-tree-protocol/5234-5.html (archive http://archive.is/Ta2cd)

https://www.cisco.com/c/en/us/support/docs/smb/switches/cisco-small-business-300-series-managed-switches/smb5760-configure-stp-settings-on-a-switch-through-the-cli.html

http://www.firewall.cx/networking-topics/protocols/spanning-tree-protocol/1054-spanning-tree-protocol-root-bridge-election.html

https://www.ciscopress.com/articles/article.asp?p=2832407&seqNum=4

https://web.archive.org/web/20170708225914/https://www.cisco.com/en/US/products/hw/switches/ps679/products_configuration_guide_chapter09186a008007d775.html

Spanning Tree solves an awkward problem with Layer 2 switching. To understand what it does, you need to understand a little about Layer 2 switch operations and broadcasting and flooding.

Switches allow hosts on the same subnet (or VLAN) to communicate with each other. Frames (the Layer 2 protocol data unit) are sent via switches.

Should a switch fail, it could bring down the network.

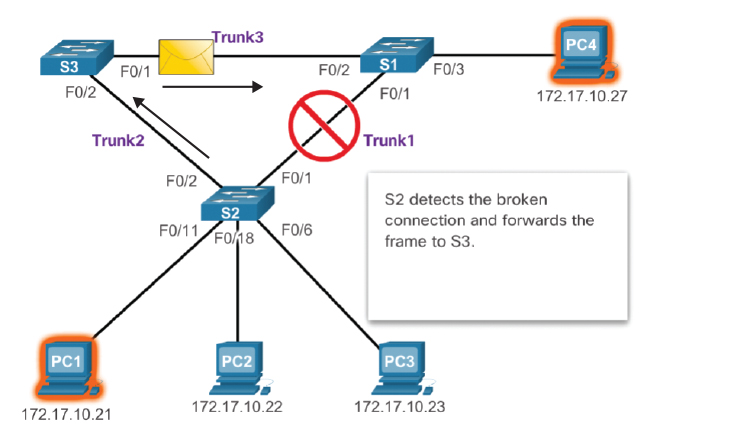

When installing a network, one may wish to install multiple switches for redundancy. Should one switch fail, traffic can be re-routed via a different path and no downtime occurs.

(example from Cisco Press - Spanning Tree Concepts)

However problems occur due to the way switches work.

Normally a switch will keep a table of MAC addresses of the devices connected to its ports. If the switch receives a frame with the destination MAC address that it has learned and has stored in its table, it will transmit the frame directly to that port.

If the switch has not learned that MAC address, by default it floods the frame out of every port (except for the port it received the frame on), just as it does with a broadcast. With redundant links between switches this can cause a switching loop: each switch keeps forwarding the flooded frames to the others, causing a potential broadcast storm.

The switches spend more and more time updating their MAC address tables until the switch's CPU overloads and crashes. Obviously this is catastrophic for the network and is never wanted.

Spanning Tree solves this problem. It does this by blocking ports that could potentially form a loop.

The Spanning Tree Protocols automate the task of blocking ports and breaking loops.

n.b. Spanning Tree Protocol still uses terminology referring to Bridges. A Bridge is basically a legacy device that worked like a 2-port switch. Bridges were used to separate collision domains when most networks had to use hubs. In practice Bridges have become obsolete, as switches became cost effective enough to replace the old hubs, but the terminology remains for STP.

By default Spanning Tree is enabled on Cisco switches.

When Spanning Tree is enabled, it assigns each port on a switch one of the following roles:

- Root Ports - the port on each non-root switch that has the best path (least cost) to the Root Bridge

- Designated Ports - the port on each link with the best path to the Root Bridge, typically the port opposite a root port on a neighbour switch. All ports on a Root Bridge are always Designated Ports

- Blocking Ports (or Alternate Ports) - the remaining ports, which would otherwise form a loop, so they are blocked

Root ports and designated ports form the most direct paths to and from the root bridge and transition to a forwarding state.

One Root Bridge (Root Switch) is selected based on an election.

The election is based on the Bridge ID, which is made up of a configurable bridge priority (32768 by default) followed by the bridge's MAC address.

The lowest Bridge ID wins.

If the priorities are identical, which they are when every switch is left at the default, the MAC address is used as the tie breaker. However this should be avoided, as older switches will likely have a numerically lower MAC address and slower interfaces than a more modern switch. Best practice is to manipulate the Spanning Tree election by setting the bridge priority manually.
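A quick way to see why leaving the election to chance is risky is to model it. The Python sketch below compares switches on priority first and MAC address second, lowest wins. The switch names, MAC addresses and priorities are invented, and it ignores the per-VLAN extended system ID that Cisco switches add to the priority.

```python
def mac_to_int(mac):
    """Convert a Cisco-style MAC (aabb.cc00.0100) to an integer for comparison."""
    return int(mac.replace(".", ""), 16)


def elect_root(bridges):
    """Lowest priority wins; the lowest MAC address breaks a tie."""
    return min(bridges, key=lambda b: (b["priority"], mac_to_int(b["mac"])))


switches = [
    {"name": "old-access", "priority": 32768, "mac": "0011.2233.4455"},
    {"name": "core-1", "priority": 32768, "mac": "aabb.cc00.0100"},
    {"name": "core-2", "priority": 32768, "mac": "aabb.cc00.0200"},
]

# Everything left at the default priority: the old switch wins on MAC address.
default_root = elect_root(switches)["name"]
print(default_root)  # old-access

# Lowering the priority on the switch you actually want fixes the election.
switches[1]["priority"] = 4096
tuned_root = elect_root(switches)["name"]
print(tuned_root)  # core-1
```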

Bridge Protocol Data Units (BPDUs) are transmitted while the STP determines the states of the ports and assigns them. The BPDUs contain the Bridge IDs and the costs.

The disadvantage of Spanning Tree is that it reduces the available bandwidth of the network, as some ports have to be blocked. However this is necessary, because otherwise the network could form loops, cause broadcast storms and crash. You can get around this by doubling up the upstream links to switches and setting up EtherChannel. Another potential solution is to use Layer 3 switches, enable Layer 3 routing and manage routes with static routes or dynamically with an IGP, e.g. OSPF.

STP - the process

STP - Further reading

https://learningnetwork.cisco.com/s/question/0D53i00000Kt09E/stp-2-nonrb-parallel-connection

“Base MAC address” used in Spanning Tree elections https://learningnetwork.cisco.com/s/question/0D53i00000Kt67u/stp-what-is-the-source-of-mac-address-in-bridge-id-details

Switch Security (Access Layer Switches)

Port security, DHCP snooping, Dynamic ARP inspection, 802.1x Identity Based Networking

Switchport Security (Port Security)

Port Security is a way to protect a switch from having unauthorised devices connected to it.

It works by checking the source MAC address of frames sent to it.

If you have ever disconnected your PC or office VoIP phone at work, connected a different PC to the ethernet cable on your desk, and found that you cannot log on to the network, that was probably Port Security being triggered!

A switch will monitor incoming frames, and determine if a violation occurs. Should a violation occur, different actions can be taken depending on the configuration.

One should take into account that nowadays MAC addresses can be easily “spoofed”. For instance you can go into your PC's Windows OS settings and just change the MAC address of your ethernet adapter. However Port Security on a Cisco switch doesn't have to check for specific MAC addresses. It can dynamically learn a MAC address, then ensure no other MAC address can use a particular port, otherwise a violation will be triggered.

Port Security Options

Port Security is enabled per port.

If you're using EtherChannel, Port Security should be enabled on the port-channel interface rather than the physical interfaces.

- Static - declare a specific MAC address authorised on an interface

- Dynamic - learn a MAC address dynamically authorised on an interface

- Sticky - learn a MAC address dynamically and add the specific MAC address to running-config, which can then be saved to startup-config (useful for adding many MAC addresses to a config that would otherwise take too long to type manually into running-config)

- Maximum - declare a maximum number of [different] MAC addresses authorised on an interface.

Port Security Violations

If the switch detects a Port Security violation, it will take an action to protect the port and the rest of the switch and network.

- Shutdown (default) - interface placed into Error Disabled state. All traffic blocked.

- Protect - interface continues to work and forward traffic, but only for authorised MAC addresses. Traffic from unauthorised devices is dropped silently: nothing is logged and the violation counter is not incremented.

- Restrict - similar to Protect: the interface continues to work and forward traffic, but only for authorised MAC addresses. Traffic from unauthorised devices is dropped, the violation is logged and the violation counter is incremented.
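The three violation modes are easier to compare side by side in a small model. The Python below is a simplified sketch, not how IOS implements it: the `SecurePort` class is hypothetical, it only covers dynamically learned addresses, and it assumes Shutdown also increments the violation counter.

```python
class SecurePort:
    """Toy model of one switchport with Port Security enabled."""

    def __init__(self, maximum=1, violation="shutdown"):
        self.maximum = maximum
        self.violation = violation  # "shutdown", "protect" or "restrict"
        self.secure_macs = set()
        self.err_disabled = False
        self.violation_count = 0

    def receive(self, src_mac):
        """Return True if the frame is forwarded, False if it is dropped."""
        if self.err_disabled:
            return False  # port is down until someone does "shut, no shut"
        if src_mac in self.secure_macs:
            return True
        if len(self.secure_macs) < self.maximum:
            self.secure_macs.add(src_mac)  # dynamic learning
            return True
        # Anything reaching this point is a violation.
        if self.violation == "shutdown":
            self.err_disabled = True
            self.violation_count += 1  # assumption: counted in this mode too
        elif self.violation == "restrict":
            self.violation_count += 1
        return False  # protect drops silently


restrict = SecurePort(maximum=1, violation="restrict")
restrict_results = [restrict.receive(m) for m in ("aaaa", "bbbb", "aaaa")]
print(restrict_results, restrict.violation_count)  # [True, False, True] 1

shutdown = SecurePort(maximum=1, violation="shutdown")
shutdown_results = [shutdown.receive(m) for m in ("aaaa", "bbbb", "aaaa")]
print(shutdown_results, shutdown.err_disabled)  # [True, False, False] True
```

With Restrict the authorised host keeps working after the violation; with Shutdown even the authorised host is cut off until the port is recovered.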

Port Security Recovery

If a Port Security violation occurs, action should be taken to correct the issue.

For Shutdown, a port will be put into Error Disabled (Errdisable). To bring it back up, one must do a “shut, no shut” on the interface to reset it. However if the problem that caused the shutdown in the first place is not taken care of, the port will go into Errdisable again! If the violation was due to another device (another MAC address) triggering port security, this other device must be removed.

Auto Recovery is also possible. An interface can be brought back up automatically after a recovery timer expires.

DHCP Snooping

In normal DHCP operations, a DHCP server will issue TCP/IP config to hosts automatically. This config includes IP addresses, subnet masks, default gateways etc.

Problems occur when 2 or more DHCP servers installed on the same network start trying to answer the DHCP requests. Your real DHCP server may effectively be blocked from issuing proper TCP/IP config to hosts by a rogue DHCP server.

A rogue DHCP server may be a malicious attacker, but is more likely to be a user who brought their home router into work and connected it to the network, with the router's DHCP server messing everything up.

The DHCP Snooping feature on a switch will monitor DHCP traffic and drop anything that may be rogue.

The port where the real DHCP server is connected is declared as a trusted port in the config, so the switch knows not to drop that particular DHCP traffic.
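The core rule can be sketched as a single check: messages that only a DHCP server should send are dropped unless they arrive on a trusted port. This Python is a deliberately minimal illustration with made-up port names; real DHCP Snooping does more, such as building a binding table.

```python
# Message types that only a DHCP server should ever send.
SERVER_MESSAGES = {"OFFER", "ACK", "NAK"}


def snoop(port, message, trusted_ports):
    """Return True if the DHCP message is allowed through, False if dropped."""
    if message in SERVER_MESSAGES and port not in trusted_ports:
        return False  # server reply from an untrusted port: likely rogue
    return True


trusted = {"f0/24"}  # hypothetical uplink towards the real DHCP server
print(snoop("f0/1", "DISCOVER", trusted))  # True  (client request)
print(snoop("f0/24", "OFFER", trusted))    # True  (real server)
print(snoop("f0/5", "OFFER", trusted))     # False (rogue server)
```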

DHCP Snooping Basic Setup

! enable DHCP snooping globally on switch

ip dhcp snooping

! declare single VLAN to protect

ip dhcp snooping vlan 1

! declare list of VLANs you want to protect

ip dhcp snooping vlan 10,199

! enter interface config

int f0/0

! enable the interface as a trusted DHCP interface

ip dhcp snooping trust

https://www.pearsonitcertification.com/articles/article.aspx?p=2474170

- Cisco Press: Switch Security https://www.ciscopress.com/articles/article.asp?p=2181836&seqNum=7

ACL - Access Control List

Access Control Lists identify characteristics of a packet, then can make a decision to deny or permit that packet.

ACLs can be configured to identify the following:

- Source IP address

- Destination IP address

- TCP/UDP Port number

Originally designed as a security measure.

ACLs come in a number of different configurations:

| Standard | Extended |
|---|---|
| Standard Numbered ACL: range 1-99, 1300-1999 | Extended Numbered ACL: range 100-199, 2000-2699 |
| Standard Named ACL | Extended Named ACL |

Standard ACLs work by checking the Source Address (or Source Subnet) only.

Extended ACLs work by checking Source IP address/subnet, Destination IP address/subnet, protocol (TCP/UDP etc), port number

As general guidelines:

- Standard ACLs are best placed as close to the destination as possible

- Extended ACLs are best placed as close to the source as possible (saves data having to traverse the whole network only to be denied, saves bandwidth)

Important things to remember:

- When an ACL is applied to an interface, it blocks everything that it does not explicitly permit.

- There needs to be at least one permit command placed in the ACL, otherwise it will block everything.

- This is because there is an implicit “deny any any” at the end of every ACL

Order of ACL entries

- Routers will read the ACL from top to bottom, much like a computer program

- As soon as a rule matches the packet, the permit/deny is applied and the ACL is not processed further.

- You put the more specific granular rules at the top, the more general rules at the bottom
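The top-to-bottom, first-match behaviour and the implicit deny can be sketched in Python. This models a standard ACL only (source address plus wildcard mask); the `evaluate` and `wildcard_match` helpers are hypothetical names for illustration, not anything from IOS.

```python
from ipaddress import IPv4Address


def wildcard_match(address, base, wildcard):
    """True if address matches base under a Cisco-style wildcard mask.

    A 0 bit in the wildcard means "must match", a 1 bit means "don't care".
    """
    care_bits = ~int(IPv4Address(wildcard)) & 0xFFFFFFFF
    return (int(IPv4Address(address)) ^ int(IPv4Address(base))) & care_bits == 0


def evaluate(acl, source):
    """Return the action of the first matching entry, else the implicit deny."""
    for action, base, wildcard in acl:
        if wildcard_match(source, base, wildcard):
            return action  # first match wins, nothing below it is read
    return "deny"  # implicit deny at the end of every ACL


specific_first = [
    ("deny", "10.10.10.10", "0.0.0.0"),
    ("permit", "10.10.10.0", "0.0.0.255"),
]
general_first = list(reversed(specific_first))

print(evaluate(specific_first, "10.10.10.10"))  # deny
print(evaluate(specific_first, "10.10.10.20"))  # permit
print(evaluate(specific_first, "192.168.1.1"))  # deny (implicit)
print(evaluate(general_first, "10.10.10.10"))   # permit (the deny is never reached)
```

The last line shows why the specific rules go at the top: with the general permit first, the host-specific deny is never evaluated.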

One more thing…

- Once your ACL is declared, it needs assigning to an interface as an IN or OUT rule

- use the access-group command to assign an ACL to an interface

Verify access lists

! running-config will show what ACLs are assigned to which interfaces if any

show running-config

! if you are only interested in one interface

show ip interface f1/0 | include access list

example output

outgoing access list is 100

inbound access list is 101

! "not set" would show if ACL is not applied

Numbered Standard example:

! deny host 10.10.10.10 access to the host connected to the router's interface, permit the rest of 10.10.10.0/24

! n.b. use wildcard mask to declare the relevant hosts or subnet

access-list 1 deny 10.10.10.10 0.0.0.0

access-list 1 permit 10.10.10.0 0.0.0.255

! assign to interface as an OUT rule

interface F0/1

ip access-group 1 out

Numbered Extended example

! Permit host 10.0.1.10 to access telnet (TCP port 23) on host 10.0.0.2, deny telnet from the rest of 10.0.1.0/24

! final "permit ip any any" is required to permit all other traffic, so other apps are not affected

! "access-list 100" declares ACL 100, which is in the 100 range so is interpreted as an Extended ACL

access-list 100 permit tcp host 10.0.1.10 host 10.0.0.2 eq telnet

access-list 100 deny tcp 10.0.1.0 0.0.0.255 host 10.0.0.2 eq telnet

access-list 100 permit ip any any

! assign ACL to interface

int f1/0

ip access-group 100 in

! To remove the ACL from the interface, use the "no" form of the command

! n.b. the ACL will still be in the running-config but shouldn't be doing anything if not assigned to an interface

int f1/0

no ip access-group 100 in

Named ACL example:

! allow telnet from 1 host but not others, allow ping from one host but not others

ip access-list extended cheesef1/0_in

permit tcp host 10.0.1.10 host 10.0.0.2 eq telnet

deny tcp 10.0.1.0 0.0.0.255 host 10.0.0.2 eq telnet

deny tcp any host 10.0.0.2 eq telnet

permit icmp host 10.0.1.11 host 10.0.0.2 echo

deny icmp 10.0.1.0 0.0.0.255 host 10.0.0.2 echo

permit ip any any

exit

int f1/0

ip access-group cheesef1/0_in in

To edit an ACL

! enter the ACL's own config mode, then add the new entry with a sequence number (15 here)

ip access-list extended 100

15 deny tcp etc

Network Address Translation (NAT)

| Static | Dynamic | Port Address Translation |
|---|---|---|
| 1-to-1 mapping of an external public IP address to a private internal IP address | Mapping of a pool of external public IP addresses to private internal IPs, with mappings created and purged as required | Uses Dynamic NAT along with TCP/UDP port numbers to tell apart the connections from each host device (“overload”) |
| Most useful for things like a web server or email server that requires a constant presence on the Internet. Pure Static NAT requires a whole public IP address per host. | To work fully, Dynamic NAT would require a public IP address for every host on the network. If there are more hosts than public IP addresses, some hosts will not be able to access the public internet until a public IP address is freed up. | Can make use of just a single public IP address and share it with multiple hosts. In theory the limit is the number of TCP/UDP ports (65535, 16 bits), but practically Cisco says about 3000 is the limit due to RAM and CPU constraints? Very common in home broadband internet connections that share a single IP address via a home wifi router with multiple client devices. |

Network Address Translation (NAT) is the process of converting an IP address that originated on 1 network into another IP to be used on another network, then converting it back again.

Predominantly used to convert private IP addresses (RFC 1918) to a publicly routable IP address so the hosts on the private network can access resources on the Internet.

Does also have limited use where there are 2 networks made up of private IP addresses (e.g. if all hosts were in the 192.168.x.x range) and they needed to be merged together temporarily, although this is rare. (2-way NAT)

Historically NAT was conceived as a way to extend the life of the existing IPv4 address space.

With NAT, it was possible for many different devices to share a small number of public IP addresses. No longer was it required for each device/host to have its own publicly routable IPv4 address.

In Cisco IOS, Access Control Lists (ACLs) are used when setting up Dynamic NAT and PAT: the ACL identifies which inside addresses should be translated.

Static NAT

Static NAT translates one public IP address to a private IP address.

Sometimes known as a 1-to-1 NAT.

To set this up on a Cisco router

- specify your “inside” and “outside” interfaces. Usually the interface with the private IP (e.g. 192.168.X.X, 10.X.X.X etc) will be the inside and the interface with the public IP will be the outside.

- declare the addresses you want to NAT

int f0/0

ip nat outside

int f0/1

ip nat inside

! static mapping: inside (private) address first, then the public address

ip nat inside source static 10.0.1.0 203.0.113.3

Dynamic NAT

Dynamic NAT makes use of a pool of addresses which are given out as they are required.

For devices like workstations that do not host any servers/services this is ideal as a public IP address does not need to be reserved for a device that may not be needed all the time.

- Specify your inside and outside interfaces

- Specify your pool of public global addresses

- Create ACL which references the internal IP addresses we want to translate

- Associate ACL with NAT pool
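The pool behaviour, including what happens when the pool runs dry, can be sketched in Python. This is a toy model under stated assumptions: the `DynamicNat` class and the addresses are invented, and it skips the ACL check and the timers that purge idle mappings.

```python
class DynamicNat:
    """Toy Dynamic NAT: hands out public addresses from a pool on demand."""

    def __init__(self, pool):
        self.free = list(pool)  # public addresses not currently in use
        self.mappings = {}      # inside address -> public address

    def translate(self, inside_ip):
        """Return the public address for inside_ip, or None if the pool is empty."""
        if inside_ip in self.mappings:
            return self.mappings[inside_ip]
        if not self.free:
            return None  # no public address available: host cannot get out
        self.mappings[inside_ip] = self.free.pop(0)
        return self.mappings[inside_ip]

    def release(self, inside_ip):
        """Purge a mapping and return its public address to the pool."""
        public_ip = self.mappings.pop(inside_ip, None)
        if public_ip is not None:
            self.free.append(public_ip)


nat = DynamicNat(["203.0.113.10", "203.0.113.11"])
first = nat.translate("10.0.1.10")
second = nat.translate("10.0.1.11")
third = nat.translate("10.0.1.12")
print(first, second, third)  # 203.0.113.10 203.0.113.11 None

nat.release("10.0.1.10")
retry = nat.translate("10.0.1.12")
print(retry)  # 203.0.113.10
```

The third host only gets out once another host's mapping is released, which is the limitation that Overload removes.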

Dynamic NAT with Overload (Port Address Translation)

Dynamic NAT with Overload makes use of different TCP/UDP port numbers.

The NAT router keeps track of the source port (as well as the source address) of each outgoing connection and translates them; when the reply packet is received, it translates back to the original source port and source address.

Effectively it allows many different devices on an “inside network” with private IP addresses to share a single external public IP.

This is a little different to straight Dynamic NAT that still requires enough public IPs for the devices you have on your network. e.g. if you had 10 devices, you would need a pool of 10 public IP addresses for your devices.

It's very difficult to explain in words!

Take for example a PC with a browser installed.

The PC is installed on a local private network, so has been assigned a private IP address, 192.168.0.20.

The network's gateway (router) has been set up with NAT Overload using Port Address Translation.

The PC needs to browse an external site, let's say http://1.1.1.1

The PC and its browser will open a TCP connection and pick a source TCP port to use (in reality the PC's operating system assigns a free port to the browser)

So the PC might open a connection using the source address and port 192.168.0.20:49165, to destination 1.1.1.1:80

The router receives this packet and does a NAT translation. It will translate the source IP address and source port to its assigned public address and some TCP port.

So what started as source 192.168.0.20:49165, to destination 1.1.1.1:80 turns into source 203.0.113.1:4096, to destination 1.1.1.1:80, for example.

The router keeps track of this translation in a table.

The public web server on 1.1.1.1 sees this packet, and sends a reply from source 1.1.1.1:80 to destination 203.0.113.1:4096

The router receives this reply packet. It sees the port number in the reply is 4096, and it knows 4096 was assigned to the device on internal private address 192.168.0.20.

So it translates the destination of the reply: source 1.1.1.1:80 to destination 203.0.113.1:4096 becomes source 1.1.1.1:80 to destination 192.168.0.20:49165.

The PC receives the reply and processes the packet.
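The walk-through above maps neatly onto a translation table. The Python below is a rough sketch using the same addresses; the `PatRouter` class is hypothetical, and handing out public ports sequentially from 4096 is an assumption made to match the example rather than a description of how IOS picks ports.

```python
class PatRouter:
    """Toy NAT Overload (PAT) router sharing one public IP address."""

    def __init__(self, public_ip, first_port=4096):
        self.public_ip = public_ip
        self.next_port = first_port
        self.outbound = {}  # (inside ip, inside port) -> public port
        self.inbound = {}   # public port -> (inside ip, inside port)

    def translate_out(self, src_ip, src_port):
        """Translate the source of an outgoing packet, creating an entry if needed."""
        key = (src_ip, src_port)
        if key not in self.outbound:
            self.outbound[key] = self.next_port
            self.inbound[self.next_port] = key
            self.next_port += 1
        return (self.public_ip, self.outbound[key])

    def translate_in(self, dst_port):
        """Translate the destination of a reply; None means no entry, so drop."""
        return self.inbound.get(dst_port)


router = PatRouter("203.0.113.1")
pc_out = router.translate_out("192.168.0.20", 49165)
laptop_out = router.translate_out("192.168.0.21", 49165)
reply_to = router.translate_in(4096)
print(pc_out)      # ('203.0.113.1', 4096)
print(laptop_out)  # ('203.0.113.1', 4097)
print(reply_to)    # ('192.168.0.20', 49165)
```

Two inside hosts that happen to use the same source port still get distinct public ports, which is how the router tells their replies apart.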

There can be many translations at once, up to the number of TCP port numbers available.

Browsers use a different source port for each TCP connection they open, including connections from different browser tabs. This is why you can browse the same site on the same PC and browser, but still browse different pages, as each session is separated by a different source TCP port.

NAT - Further reading

- Cisco's definitions of NAT (incl Local and Global) https://www.cisco.com/c/en/us/support/docs/ip/network-address-translation-nat/4606-8.html

- IPv6 vs IPv4 NAT in Xbox Live https://www.reddit.com/r/xboxone/comments/9qzpti/is_ipv6_actually_important_to_have_on_like_xbox/

- Check Point NAT https://www.youtube.com/watch?v=Szc-Yj2bHYk

- Check Point NAT, Hide NAT, Static NAT https://sc1.checkpoint.com/documents/R81/WebAdminGuides/EN/CP_R81_SecurityManagement_AdminGuide/Topics-SECMG/Configuring-NAT-Policy.htm

Cisco's Implementations of NAT

IPv6

EUI-64

SLAAC

WAN types

- Metro Ethernet (Metro-E)

- MPLS VPN

- DSL

- Cable

- Wireless WAN (cellular 3G,4G,LTE,5G)

- Fibre ethernet (Fibre to the premises)

VPN

Turns out what you thought you knew about VPNs you should probably forget and start from scratch. It's more complex than you thought. :(

A Virtual Private Network is a private network within a public network. That's it.

You may think that a VPN is a “secure network”, and although this is common, strictly speaking a VPN does not automatically mean it is secure.

You may have heard of VPN providers selling VPN as a service. What they're actually selling you is more than literally the VPN. They are selling a secured and anonymised internet service and using VPN technologies to supply this to you.

Your plain old ordinary VPN is literally just a private network over a public network.

VPNs are built using different devices, modes and technologies.

Traditionally, to link private networks that are situated in different locations together, one would have to install private links between the sites: leased line, MPLS, satellite, Frame Relay, etc.

This can be expensive especially if there are many sites to be linked together.

However one can do this virtually using a public network like the Internet. You use a tunnelling protocol to encapsulate data from your private networks, send it across the public network and when it reaches the other end of the tunnel it is de-encapsulated. This is your virtual private network.

It is common to implement security as well, to prevent others from seeing or changing the data as it is transmitted.

https://www.cisco.com/c/dam/en_us/training-events/le21/le34/downloads/689/academy/2008/sessions/BRK-134T_VPNs_Simplified.pdf

https://networklessons.com/cisco/ccna-routing-switching-icnd2-200-105/introduction-to-vpns

Types of VPN, Remote access, Personal, Mobile, site-to-site https://www.top10vpn.com/what-is-a-vpn/vpn-types/

IPSec

sample chapter from CCIE Routing and Switching v5.1 Foundations: Bridging the Gap Between CCNP and CCIE

https://www.ciscopress.com/articles/article.asp?p=2803868

Tunnel Mode and Transport Mode

https://www.ciscopress.com/articles/article.asp?p=25477&seqNum=2 (Same article! https://www.informit.com/articles/article.aspx?p=25477)

http://www.firewall.cx/networking-topics/protocols/127-ip-security-protocol.html

https://www.dummies.com/programming/networking/enterprise-mobile-device-security-comparing-ipsec-and-ssl-vpns/

https://networklessons.com/cisco/ccie-routing-switching/ipsec-internet-protocol-security

IPsec by Oracle

https://docs.oracle.com/cd/E53394_01/html/E54829/ipsecov-7.html

https://docs.oracle.com/cd/E53394_01/html/E54829/ipsecov-1.html

http://www.internet-computer-security.com/VPN-Guide/ESP.html

https://www.psychz.net/client/question/en/is-gre-secure.html#:~:text=Generic%20Routing%20Encapsulation%20(GRE)%20is,secure%2C%20does%20not%20provide%20encryption.

http://blog.boson.com/bid/92815/what-are-the-differences-between-an-ipsec-vpn-and-a-gre-tunnel

IPsec pentesting https://subscription.packtpub.com/book/networking_and_servers/9781787121829/1/ch01lvl1sec17/pentesting-vpn-s-ike-scan

Further reading

Block ciphers and stream ciphers (symmetrical encryption) https://www.thesslstore.com/blog/block-cipher-vs-stream-cipher/

Network Architecture

LAN architecture

Power over Ethernet

PoE policing modes : https://www.cisco.com/c/en/us/td/docs/switches/lan/catalyst4500/12-2/53SG/configuration/config/PoE.html#wp1087217

Cisco IOS operations and Device security

IOS command hierarchy,

Console, telnet, SSH, VTY lines,

enable password, service password-encryption, enable secret

privilege levels

login banner, exec banner

NTP

AAA

Switch Memory Types

Cisco Switches contain different types of memory to store configurations

RANDOM ACCESS MEMORY RAM (sometimes called DRAM)

Stores running-configuration and the IOS program loaded from Flash.

This is volatile memory that is lost when the device is powered off, exactly like RAM in a PC.

FLASH MEMORY

Stores the IOS software and vlan.dat (VLAN config)

This is either flash chips on the device's motherboard or a removable memory card.

NVRAM Non volatile Random Access Memory

Stores startup-configuration. Contents not lost when the device is powered off, but can be re-written to if required.

READ ONLY MEMORY ROM

Stores the bootstrap program, which makes the device look in Flash memory for the IOS image and load it into RAM (like a BIOS on a PC?)
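As a quick revision aid, the four memory types can be written down as a small lookup table. This is only a sketch of the notes above; the dictionary layout and the function name are my own and do not come from any Cisco tool.

```python
# Summary of the memory types described in these notes.
# "volatile" means the contents are lost when the device is powered off.
MEMORY = {
    "RAM": {"volatile": True, "holds": ["running-config", "loaded IOS image"]},
    "Flash": {"volatile": False, "holds": ["IOS software", "vlan.dat"]},
    "NVRAM": {"volatile": False, "holds": ["startup-config"]},
    "ROM": {"volatile": False, "holds": ["bootstrap"]},
}


def lost_on_power_off(memory=MEMORY):
    """Return everything that disappears when the switch loses power."""
    lost = []
    for info in memory.values():
        if info["volatile"]:
            lost.extend(info["holds"])
    return lost


print(lost_on_power_off())
```

This is why unsaved changes vanish after a reload: they only exist in running-config (RAM) until they are copied to startup-config (NVRAM).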

Further reading OCG Vol 1, Chapter 4, Page 100, Storing Switch Configuration Files

SVI - Switched Virtual Interface

A Layer 2 switch will support a single Switched Virtual Interface for the purposes of management. This includes remotely connecting to it via SSH, telnet, or for getting info via SNMP.

Further reading OCG1 Chapter 6

A Layer 3 (multilayer) switch will support multiple SVIs and inter-VLAN routing between the SVIs.

Network Device Management

Syslog, syslog severity levels 0-7, debug, SIEM, NMS, SNMP, SNMP v2c, SNMP v3, MIB
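A small sketch to help memorise the syslog severity levels 0-7. The names are the IOS keywords as I understand them, and the helper function is hypothetical; it just illustrates that choosing a severity threshold includes that level plus every more severe (lower numbered) level.

```python
# Syslog severity levels, lowest number = most severe.
SYSLOG_SEVERITIES = {
    0: "emergencies",
    1: "alerts",
    2: "critical",
    3: "errors",
    4: "warnings",
    5: "notifications",
    6: "informational",
    7: "debugging",
}


def levels_logged(threshold):
    """Return the severities included when logging at the given threshold."""
    if threshold not in SYSLOG_SEVERITIES:
        raise ValueError("severity must be between 0 and 7")
    # The threshold level and everything more severe (numerically lower).
    return [SYSLOG_SEVERITIES[n] for n in range(threshold + 1)]


print(levels_logged(2))
```

So a threshold of 7 (debugging) logs everything, while a threshold of 0 only logs emergencies.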

QoS - Quality of Service

work in progress!

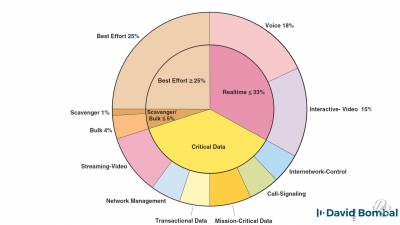

QoS, dedicated (separate) networks, converged networks, shared bandwidth, quality requirements

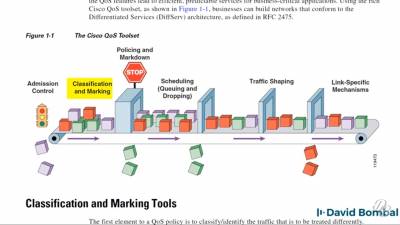

latency, jitter, loss, FIFO (First In First Out), congestion

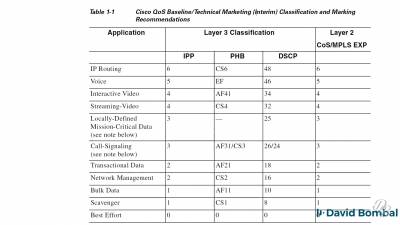

QoS classification, QoS marking, Cos, DSCP, ACL, NBAR, 802.1q
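To make the marking values less abstract, here is a minimal sketch of the arithmetic behind DSCP. It assumes the standard layout where DSCP is the upper 6 bits of the 8-bit ToS byte in the IP header, and the usual Assured Forwarding formula (AFxy = 8x + 2y). The function names are made up for illustration.

```python
EF = 46  # Expedited Forwarding, typically used for voice


def dscp_to_tos(dscp):
    """Return the full ToS byte value for a DSCP value (0-63)."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP is a 6-bit value (0-63)")
    # DSCP sits in the top 6 bits; the bottom 2 bits are used for ECN.
    return dscp << 2


def af_to_dscp(af_class, drop_precedence):
    """Return the DSCP decimal value for AF<class><drop>, e.g. AF41."""
    if af_class not in (1, 2, 3, 4) or drop_precedence not in (1, 2, 3):
        raise ValueError("AF class is 1-4 and drop precedence is 1-3")
    return 8 * af_class + 2 * drop_precedence


print(dscp_to_tos(EF))   # 184
print(af_to_dscp(4, 1))  # 34
```

CoS works differently: it is a 3-bit value (0-7) carried in the 802.1q tag, so it only exists on tagged frames.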

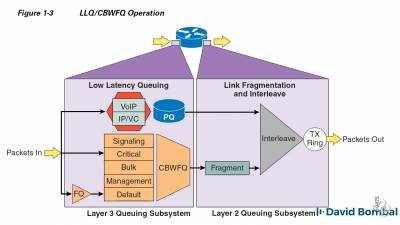

Queuing, CBWFQ (Class Based Weighted Fair Queuing), LLQ (Low Latency Queuing), MQC modular QoS CLI, MQC framework, class maps, policy maps, service policies.

Policing and shaping

Worm and junk traffic mitigation

https://www.cisco.com/c/en/us/td/docs/solutions/Enterprise/WAN_and_MAN/QoS_SRND/QoS-SRND-Book/QoSIntro.html?hcb=1

https://www.ciscopress.com/store/end-to-end-qos-network-design-quality-of-service-for-9781587143694

Cloud Computing

There's been a lot of buzz around Cloud Computing in recent years, along with sayings like “Cloud is somebody else's computer”. Actually, a service has to meet some strict criteria before you can technically call it cloud.

The 5 things that define cloud according to NIST:

- On-demand self service

- Broad network access

- Resource pooling

- Rapid elasticity

- Measured service

Wireless (IEEE 802.11)

Wireless Basics

Technically all sorts of things can be called “wireless”, including infrared remote controls, AM/FM radio, cellular radio, Bluetooth, Qi charging, NFC, etc

Wireless in computer networking formally refers to IEEE 802.11, and Cisco and the CCNA generally use the term in this way too.

Wireless networks are defined in IEEE 802.11 as Service Sets. Different service sets define the different types.

3 types of wireless service set:

- Independent (IBSS, aka Adhoc)

- Infrastructure (BSS, ESS, WifiDirect)

- Mesh MBSS

Independent wireless network is where devices connect directly to each other (without a wireless access point).

IEEE 802.11 defines this as Independent Basic Service Set (IBSS).

Also known as Adhoc. Apple Airdrop works in this way. Is a type of peer-to-peer (P2P) networking.

Pictochat on Nintendo DS consoles appears to use similar p2p networking. It allowed up to 16 consoles to connect to each other and send messages within the adhoc network.

Infrastructure wireless network requires a wireless access point.

IEEE 802.11 defines this as Basic Service Set (BSS).

Typical home user internet services with a provider supplied wireless router work in this way.

All the parameters of the wireless network are controlled by the wireless AP, eg frequencies (channels), encryption etc

Mesh networks are made up of a group of wireless access points linked together using a wireless backbone. Typically they will be linked together using 5GHz, leaving 2.4GHz available for wireless stations (devices) to connect to and access the network. This type of link is useful for situations where it is not possible to link wireless APs together using ethernet cables and switches.

SSID - Service Set Identifier. This SSID defines a wireless network.

These are the names you see when you check for visible wireless networks you can connect to with your wireless device.

SSIDs do not have to be unique, although it is best practice to make them unique, eg to separate the corporate office network and free wifi that ordinary members of the public may use. The corporate wifi SSID could be called “MadeUpCo_Corporate”, the public guest wifi could be called “MadeUpCo_Guest”.

The BSSID (Basic Service Set Identifier) is an ID used to identify an individual BSS, which in practice means the wireless AP serving it. For example if you have a large network with multiple wireless APs all broadcasting the same SSID (an ESS, Extended Service Set), each wireless AP will require a unique BSSID. Functionally this is similar to MAC addresses on a switched network, as wireless stations use the BSSID in frame headers to identify the AP they are transmitting to. BSSIDs themselves are based on the MAC addresses of the wireless APs.

BSA - Basic Service Area - defines the physical area that a wireless AP covers. BSA covers a physical area, while a BSS covers a group of devices.

DS - Distribution System, defined as the upstream part of the network that the wireless access point connects to. So if you have a wireless AP linked to a switch, which in turn is linked to a router, the switch and the router are the DS.

Wireless Access Points

Wireless access points are the devices equipped with the wireless antenna to transmit and receive wireless signals and connect to the Distribution System via an ethernet interface.

They serve as a central connection point in a wireless network. They also serve as a bridge to the wired network.

Wireless APs work in either Autonomous or Lightweight mode.

Wireless LAN Controllers

If your access points are set up in lightweight mode, they will require a Wireless LAN Controller to work. It has the benefit of being able to control the whole wireless network from one location, instead of having to individually set up multiple APs in autonomous mode. The WLAN controller manages the various VLANs and WLANs and passes traffic between them.

Dynamic Interfaces

https://www.firewall.cx/cisco-technical-knowledgebase/cisco-wireless/1077-cisco-wireless-controllers-interfaces-ports-functionality.html

https://rscciew.wordpress.com/2014/01/22/configure-dynamic-interface-on-wlc/

https://twitter.com/petermoorey/status/775714833698127872?s=19

Wireless Network Architectures

Autonomous

Networks using Autonomous APs are the simplest. They are best suited to small sites where wireless only needs to cover a small area and requires only a few APs. The higher the number of APs required, the more difficult it is to set up and maintain.

Autonomous APs have to be set up individually, so you would literally have to go into each AP and configure it. If you have lots of APs, you may want to consider using a Wireless LAN Controller (WLC) with lightweight APs instead, as everything can be managed centrally from the WLC.

Split-MAC with Lightweight APs and WLC

Cloud Based APs

Cloud based APs are said to be “in between” Autonomous AP and Split-MAC architecture.

Autonomous APs are managed centrally in the cloud. Local production data traffic gets routed locally within the LAN (like in normal autonomous APs), but control/management traffic gets sent to the cloud controller.

Cisco's cloud AP is Meraki.

https://documentation.meraki.com/Architectures_and_Best_Practices/Cisco_Meraki_Best_Practice_Design/Meraki_Cloud_Architecture

Further Reading - Wireless

https://tamxuanla.blogspot.com/2018/06/resetting-cisco-air-cap1702i-e-k9.html

https://www.youtube.com/watch?v=qp2kb_R287c

https://www.firewall.cx/cisco-technical-knowledgebase/cisco-wireless/1077-cisco-wireless-controllers-interfaces-ports-functionality.html

https://www.ciscopress.com/articles/article.asp?p=344242

https://www.speaknetworks.com/cisco-wireless-controller-configuration/

Network Automation and Programmability

https://blogs.cisco.com/developer/ansible-for-dna-center

Differences between SOAP and REST

Python Netmiko to SSH into Cisco IOS devices

https://developer.cisco.com/video/net-prog-basics/

Cisco Live DEVNET-1725: How to Be a Network Engineer in a Programmable Age

https://www.ciscolive.com/global/on-demand-library.html?search=DEVNET-1725#/session/1488925129352001ZaCR

SDN - Software Defined Networking

https://www.researchgate.net/publication/325560149_Network_convergence_in_SDN_versus_OSPF_networks

VMware NSX

https://www.vmware.com/topics/glossary/content/software-defined-networking

Useful pages

Unicast, Broadcast & Multicast https://learningnetwork.cisco.com/thread/66629

Layer 2 and Layer 3 switching

https://documentation.meraki.com/MS/Layer_3_Switching/Layer_3_vs_Layer_2_Switching

TCP/IP Model (TCP/IP Layers) https://docs.oracle.com/cd/E19683-01/806-4075/ipov-10/index.html

Subnet prefix https://docs.oracle.com/cd/E19109-01/tsolaris8/816-1048/networkconcepts-2/index.html

https://www.9tut.com/

https://pg1x.com/tech:network:cisco:ospf:area-prefix-list:area-prefix-list

https://www.grandmetric.com/2018/03/08/how-does-switch-work-2/

Switches are layer 2 but ACLs can check the IP address and tcp/udp and ports??? https://community.cisco.com/t5/switching/layer-2-devies-and-acl-s/td-p/1284013

Types of WAN connection - https://www.ictshore.com/free-ccna-course/wan-connections/

CCNA subreddit https://www.reddit.com/r/ccna/

Cisco Adaptive Security Appliance (Cisco ASA) https://www.cisco.com/c/en_uk/products/security/adaptive-security-appliance-asa-software/index.html

Things to avoid / Best Practices

Things I've been told to be careful of or to avoid when doing networking.

Leaving the Native VLAN on VLAN 1

Leaving the native VLAN on VLAN 1 exposes the network to attacks such as VLAN hopping by double tagging, so it should be changed to another unused VLAN.

The VLAN VTP Server killing all production VLANs

You will have different VLANs set up on your network if you require network data to only go where you want it to go. Layer 2 switches by default are set up to work as one big broadcast domain. This means if you send data through one port on a switch and the switch does not know the port for the destination MAC address, it will flood the frame out of all other interfaces. VLANs separate the interfaces into different broadcast domains, which makes transmitting data a little more efficient and a little more secure.

If you have a complex VLAN setup with various VLANs, it can be quite a task to set this up on all your switches. For VLANs to work properly, VLAN config needs to be set up on all the switches in the network expected to trunk traffic for those particular VLANs. This instructs the switches to direct the relevant ethernet frames carrying the dot1q headers to the right places.

To manage VLANs more easily, the VLAN Trunking Protocol (VTP) allows you to manage these various VLANs in 1 place. You can set up a switch as a VTP server, then set up other switches as VTP clients.

The thing to be careful of is introducing an old switch you may have found lying around, plugging it into the network, and finding that the switch happens to be running VTP Server with a higher revision number. VTP will then start using the VLANs set up on that old VTP Server, as they take priority over those on your real VTP Server. This could potentially delete all the production VLANs you spent ages carefully setting up and drop live hosts from the network. THIS IS REALLY BAD.

It is probably safer to reset the switch to factory settings before connecting it.

If you really need the old config on that switch, isolate the switch and copy the running-config first, then reset the switch to factory settings.
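The revision number problem can be sketched in a few lines of Python. This is a deliberately simplified model, assuming only that a switch accepts an advertisement from the same VTP domain when its revision number is higher than its own. It ignores VTP passwords, VTP versions and transparent mode, and all the names and VLAN numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VtpDatabase:
    domain: str
    revision: int
    vlans: frozenset


def receive_advertisement(local, advert):
    """Return the VLAN database a switch ends up with after an advert."""
    if advert.domain != local.domain:
        return local  # different VTP domain, advert is ignored
    if advert.revision > local.revision:
        # Higher revision wins, whatever VLANs it contains.
        return VtpDatabase(local.domain, advert.revision, advert.vlans)
    return local


production = VtpDatabase("corp", 12, frozenset({10, 20, 30}))
old_switch = VtpDatabase("corp", 40, frozenset({1}))
factory_reset = VtpDatabase("corp", 0, frozenset({1}))

print(sorted(receive_advertisement(production, old_switch).vlans))     # [1]
print(sorted(receive_advertisement(production, factory_reset).vlans))  # [10, 20, 30]
```

The old switch wipes out VLANs 10, 20 and 30 purely because its revision number is higher, which is why resetting it (revision back to 0) before connecting is the safer option.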

Rogue DHCP servers

DHCP would normally handle issuing IP addresses (and other information like DNS server etc) by a DHCP server answering a request from a DHCP client.

For this to work properly, this information must come from one central place: a single DHCP server (or perhaps that server plus one backup you have installed).

If another device hosting a DHCP server is connected to the network, it may answer the DHCP requests instead and cause problems with the rest of the network.

Typically this “rogue” DHCP server comes from a user bringing their own home broadband router into the office to expand the available ports. This router will have a built-in DHCP server, and the user connecting it to the ethernet switch is unaware it would cause problems.

To prevent this, DHCP Snooping should be enabled on the switches. It monitors DHCP activity and only allows DHCP server replies from ports marked as trusted. Port security also helps to reduce the likelihood of problems by limiting which devices can connect to a port.
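The basic idea behind DHCP Snooping can be sketched as a simple filter. This is a rough model, assuming only the core rule that DHCP server messages are dropped when they arrive on an untrusted port; the real feature also builds a binding table and does rate limiting, which is not shown here. Port names and the function name are hypothetical.

```python
# Messages that only a DHCP server should be sending.
SERVER_MESSAGES = {"OFFER", "ACK", "NAK"}


def snooping_permits(message_type, in_port, trusted_ports):
    """Return True if the switch would forward this DHCP message."""
    if message_type in SERVER_MESSAGES:
        # Server replies are only accepted from trusted ports.
        return in_port in trusted_ports
    # Client messages (DISCOVER, REQUEST...) are allowed from any port.
    return True


trusted = {"Gi0/1"}  # uplink towards the real DHCP server

print(snooping_permits("OFFER", "Gi0/1", trusted))     # True
print(snooping_permits("OFFER", "Fa0/5", trusted))     # False
print(snooping_permits("DISCOVER", "Fa0/5", trusted))  # True
```

The home router plugged into Fa0/5 can still ask for an address like any other client, but its own offers never reach the other hosts.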

Don't have single points of failure

Video by Network Chuck https://youtu.be/wwwAXlE4OtU

Use Enable Secret instead of Enable password and service password-encryption

Try putting 070C285F4D06 into Google. You'll probably find it is the Type 7 encoding of “cisco” from a Cisco IOS router. Type 7 is not really a hash: it is a reversible obfuscation that has long been broken and shouldn't be used to protect data.

Cisco routers and switches that come from factory have no passwords or restrictions in their settings.

Cisco IOS has different levels of access within the interface:

- User Exec mode

- Privileged Exec mode (aka “Enable Mode” because you have to type “enable” or “en” to get to it)

- Global Configuration

- Interface/Router/Line Configuration

User exec and Privileged exec can be protected by a password that must be entered by the user to gain access to that mode. The password itself is stored in the running-config (and startup-config).

For instance you can use the “enable password” command to apply a password.

R1(config)#enable password mypasswordpleasedonthack

However with this method the password is stored in running-config in PLAIN TEXT, unencrypted. The show running-config or show run command will display the config including the password:

Router(config)#do show running-config

Building configuration...

Current configuration : 715 bytes

!

version 15.1

no service timestamps log datetime msec

no service timestamps debug datetime msec

no service password-encryption

!

hostname Router

!

!

!

enable password cisco

!

!

!

!

!

!

ip cef

no ipv6 cef

IOS does feature a “service password-encryption” command that will obfuscate the passwords stored in running-config so they are no longer human readable. After running service password-encryption:

Router(config)#do show run

Building configuration...

Current configuration : 721 bytes

!

version 15.1

no service timestamps log datetime msec

no service timestamps debug datetime msec

service password-encryption

!

hostname Router

!

!

!

enable password 7 0822455D0A16

!

!

!

!

!

!

ip cef

no ipv6 cef

The “7” showing in the line “enable password” denotes Type 7 and means the password has been encoded using the service password-encryption command.
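To show just how weak Type 7 is, here is a minimal decoder sketch. It assumes the widely published scheme: the first two digits are a decimal offset into a fixed key string, and each following pair of hex digits is XORed with the next character of that key. The key string below is the one commonly quoted in public Type 7 tools, so treat it as an assumption rather than official Cisco documentation.

```python
# Commonly published Type 7 key string (assumption, not from Cisco docs).
TYPE7_KEY = "dsfd;kfoA,.iyewrkldJKDHSUBsgvca69834ncxv9873254k;fg87"


def decode_type7(encoded):
    """Recover the plain text password from a Type 7 string."""
    seed = int(encoded[:2])  # decimal starting offset into the key
    hex_pairs = encoded[2:]
    chars = []
    for i in range(0, len(hex_pairs), 2):
        byte = int(hex_pairs[i:i + 2], 16)
        key_char = TYPE7_KEY[(seed + i // 2) % len(TYPE7_KEY)]
        chars.append(chr(byte ^ ord(key_char)))
    return "".join(chars)


print(decode_type7("0822455D0A16"))  # cisco
print(decode_type7("070C285F4D06"))  # cisco
```

Both strings decode to the same password because the only difference is the starting offset. No cracking is needed, it is just a lookup and an XOR.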

It is best practice to use the enable secret command instead, which stores a hash of the password in running-config so it is not easily cracked. enable secret will hash the password (using MD5 by default on classic IOS) and it shows up as type 5.

Further reading

- Cisco Type 7 Reverser https://packetlife.net/toolbox/type7/

- Wendell Odom Official Cert Guide Vol 2 Chapter 5 Page 89

SNMP

Simple Network Management Protocol is a way for devices to send data to each other about network management. It can be used to request statistics or to notify state changes, eg HSRP state change.

SNMP v3 supports encryption and strong authentication (usernames and passwords) so use this if you can.

SNMP v1 and v2c use Community Strings to protect the data these devices send. These community strings act a lot like passwords and are sent in PLAIN TEXT, so they are unsecured.

Often by default a Read Only (RO) community string will be set to “public” and a Read Write (RW) community string will be set to “private”. These should be changed from their defaults.

If you are not actually using SNMP, best practice is to disable it altogether.
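A quick way to check a saved config for default community strings is sketched below. It assumes the config contains lines in the usual `snmp-server community <string> RO|RW` form; the function name and the sample config are made up for illustration.

```python
import re

# Matches lines such as "snmp-server community public RO".
COMMUNITY_RE = re.compile(r"^snmp-server community (\S+)", re.IGNORECASE)
DEFAULT_STRINGS = {"public", "private"}


def default_communities(config_text):
    """Return any default community strings found in a config."""
    found = []
    for line in config_text.splitlines():
        match = COMMUNITY_RE.match(line.strip())
        if match and match.group(1).lower() in DEFAULT_STRINGS:
            found.append(match.group(1))
    return found


sample = """hostname R1
snmp-server community public RO
snmp-server community S3cr3tStr1ng RW
"""

print(default_communities(sample))  # ['public']
```

An empty list does not mean SNMP is secure, only that the two best known defaults are not in use.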

Networking Hardware

Differences between Layer 3 Switches and Routers?

Traditionally network switches (Layer 2 switches) link the hosts within a subnet together, and a network router routes traffic between different subnets and links to WANs. L2 switches work by keeping records of known MAC addresses in tables. When a switch receives a frame, it will check the frame headers to see if the destination MAC address is known and forward it to its destination interface. It will flood the frame out of all other ports if the destination MAC address is not in its table. It has no awareness of IP addresses.

Routers that receive a packet will check the destination IP address in the packet's headers, then decide where to route it. Decisions the router takes could involve a static route, a route learned from an interior gateway protocol, an Access Control List etc.

In short, switches check MAC addresses in frames, routers check IP addresses in packets.
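The Layer 2 side of this can be sketched as a toy switch that learns source MAC addresses and either forwards or floods. It is a simplified model with no VLANs and no ageing of table entries; the class and port names are hypothetical.

```python
BROADCAST = "ffff.ffff.ffff"


class Layer2Switch:
    def __init__(self, ports):
        self.ports = list(ports)
        self.mac_table = {}  # MAC address -> port it was learned on

    def receive(self, in_port, src_mac, dst_mac):
        """Process one frame and return the list of ports it is sent out of."""
        # Learn (or refresh) where the sender lives.
        self.mac_table[src_mac] = in_port
        if dst_mac != BROADCAST and dst_mac in self.mac_table:
            out_port = self.mac_table[dst_mac]
            # Never send a frame back out of the port it arrived on.
            return [] if out_port == in_port else [out_port]
        # Broadcast or unknown unicast: flood to every other port.
        return [p for p in self.ports if p != in_port]


sw = Layer2Switch(["Fa0/1", "Fa0/2", "Fa0/3"])
print(sw.receive("Fa0/1", "aaaa.aaaa.aaaa", "bbbb.bbbb.bbbb"))  # ['Fa0/2', 'Fa0/3']
print(sw.receive("Fa0/2", "bbbb.bbbb.bbbb", "aaaa.aaaa.aaaa"))  # ['Fa0/1']
print(sw.receive("Fa0/1", "aaaa.aaaa.aaaa", "bbbb.bbbb.bbbb"))  # ['Fa0/2']
```

The first frame is flooded because the switch has not seen bbbb.bbbb.bbbb yet. Once that host replies, its port is learned and later frames go to that port only. At no point does the switch look at an IP address.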

A newer type of device called Layer 3 Switches (or Multilayer Switches) has become more common.

A Layer 3 switch will perform the functions of a regular Layer 2 switch with the addition of some Layer 3 routing functions that you will find in a router.

It would not be accurate to say a Layer 3 switch is just another type of router as there are some notable differences. It may be true to say a L3 switch is a L2 switch with some enhancements.

You could feasibly take a L3 switch and simply use it exactly like a L2 switch and never use its additional L3 features.

Routers will sometimes have expansion slots to upgrade their capabilities. Additional WAN interface cards (WICs) can be installed so a router can support serial, DSL, ISDN, fibre etc.

Switches have a fixed number of ethernet ports and are unlikely to have any expandability.

Routers primarily make their decisions based on software calculations. Switches have specialised ASICs (application-specific integrated circuits) which make them very quick at making decisions.

Layer 3 switches are more likely to be found in campus distribution layer. Layer 2 switches are more likely to be found in a campus access layer.

https://community.fs.com/blog/layer-3-switch-vs-router-what-is-your-best-bet.html

Ethernet Cabling

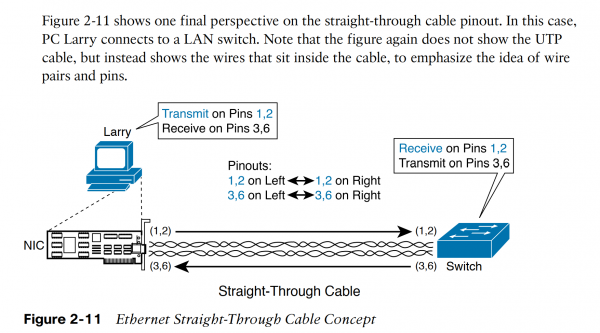

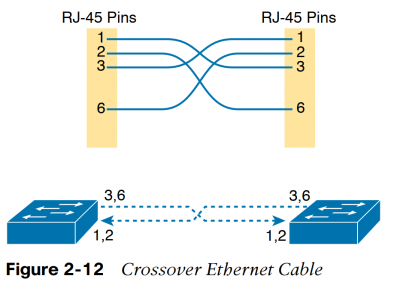

Devices equipped with ethernet sockets will have either of 2 different configurations which have differing pinouts.

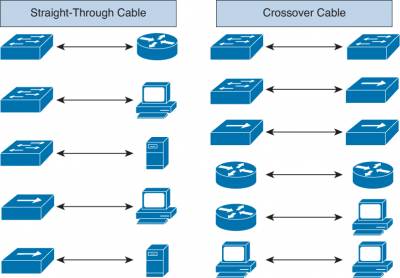

There are 2 types of cable used to link these devices together. A Straight-through cable and a Crossover cable.

Basic straight through cable concept:

In straight-through cables for Ethernet and Fast Ethernet, pins 1, 2, 3 and 6 are wired “straight through” to the same pin numbers at the other end.

In crossover cables the transmit and receive pins are “flipped”, so Pin 1 lines up with Pin 3 and Pin 2 lines up with Pin 6.
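The pin mappings and the choice of cable can be sketched as follows. This assumes the usual rule for 10/100 Mbps Ethernet: hosts and routers transmit on pins 1 and 2, switches and hubs transmit on pins 3 and 6, so two devices from the same group need a crossover cable. The names are my own, and in practice many modern ports use auto-MDIX and work with either cable.

```python
# Pin at one end -> pin at the other end.
STRAIGHT_THROUGH = {1: 1, 2: 2, 3: 3, 6: 6}
CROSSOVER = {1: 3, 2: 6, 3: 1, 6: 2}

# Which pin pair each device type transmits on.
TRANSMIT_PINS = {
    "host": (1, 2),
    "router": (1, 2),
    "switch": (3, 6),
    "hub": (3, 6),
}


def cable_for(device_a, device_b):
    """Return the cable type needed to link two devices directly."""
    if TRANSMIT_PINS[device_a] == TRANSMIT_PINS[device_b]:
        # Both ends transmit on the same pins, so the pairs must be swapped.
        return "crossover"
    return "straight-through"


print(cable_for("host", "switch"))    # straight-through
print(cable_for("switch", "switch"))  # crossover
print(cable_for("router", "host"))    # crossover
```

The rule of thumb: like devices need a crossover, unlike devices need a straight-through.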

| Straight-through | Crossover |
|---|---|
| Host to switch or hub; Router to switch or hub | Switch to switch; Hub to hub; Hub to switch; Host to host; Router direct to host; Router to router |

https://www.ciscopress.com/articles/article.asp?p=2092245&seqNum=3

| IEEE | Standard | Common Name | Medium | Speed | Max Length |
|---|---|---|---|---|---|
| 802.3 | 10BASE-T | Ethernet | 2 pairs copper | 10 Mbps | 100m |
| 802.3u | 100BASE-T | Fast Ethernet or FE | 2 pairs copper | 100 Mbps | 100m |
| 802.3ab | 1000BASE-T | Gigabit Ethernet or GE | 4 pairs copper | 1000 Mbps (1 Gbps) | 100m |

| IEEE | Standard | Common Name | Medium | Speed | Max Length |
|---|---|---|---|---|---|
| 802.3z | 1000BASE-LX | Gigabit Ethernet | ?? | 1000 Mbps (1 Gbps) | 5000m |
| 802.3? | 10GBASE-S | 10 Gigabit? | Multi-mode fibre with LED | 10,000 Mbps (10 Gbps) | 400m |
| | 10GBASE-LX4 | 10 Gigabit? | Multi-mode fibre with LED | 10 Gbps | 300m |
| | 10GBASE-LR | 10 Gigabit? | Single-mode fibre with Laser | 10 Gbps | 10km |
| | 10GBASE-E | 10 Gigabit | Single-mode fibre with Laser | 10 Gbps | 30km |

Cabling Guide for Console and AUX Ports

https://www.cisco.com/c/en/us/support/docs/routers/7000-series-routers/12223-14.html

Can a huge coiled LAN cable have some trouble for transmitting a signal?

https://superuser.com/questions/475934/can-a-huge-coiled-lan-cable-have-some-trouble-for-transmitting-a-signal

Protective boots around the ethernet cable plug accidentally resetting some Cisco devices!

https://www.cisco.com/c/en/us/support/docs/field-notices/636/fn63697.html

CCNA Exam

New CCNA: the new exam goes live on February 24, 2020, exam code 200-301 CCNA

https://www.cisco.com/c/en/us/training-events/training-certifications/certifications/associate/ccna.html

200-301 CCNA Exam topics

https://learningnetwork.cisco.com/community/certifications/ccna-cert/ccna-exam/exam-topics

200-301 CCNA Exam topics in PDF

https://www.cisco.com/c/dam/en_us/training-events/le31/le46/cln/marketing/exam-topics/200-301-CCNA.pdf

200-301 information

https://study-ccna.com/new-ccna-exam-200-301/

Old CCNA exams for CCENT, ICND1 (100-105), ICND2 (200-105)

ICND - Interconnecting Cisco Network Devices

https://www.cisco.com/c/en/us/training-events/training-certifications/exams/current-list/100-105-icnd1.html

https://www.cisco.com/c/en/us/training-events/training-certifications/exams/current-list/200-105-icnd2.html

CCNA Routing and Switching (200-125)

https://www.cisco.com/c/en/us/training-events/training-certifications/certifications/associate/ccna-routing-switching.html

old 200-125 CCNA Routing and Switching practice questions

https://searchnetworking.techtarget.com/quiz/Cisco-CCNA-exam-Are-you-ready-Take-this-10-question-quiz-to-find-out

Ten Reasons You Should Get Cisco CCNA Routing and Switching Certified

https://learningnetwork.cisco.com/docs/DOC-30659

https://www.techrepublic.com/article/4-android-apps-to-help-you-ace-ccna-and-other-networking-exams/

https://learningnetwork.cisco.com/blogs/certifications-and-labs-delivery/2016/10/10/demystifying-the-cisco-score-report

https://www.cbtnuggets.com/blog/certifications/cisco/is-the-ccna-rs-difficult

“I got CCNA certified almost 15 years ago. And even though I am not a networking guy, I am still benefiting from what I have learned from the “old” CCNA R&S. Having a good understanding of networking, routing protocols, vLANs, WAN technologies, etc will really help you in any IT career path.”

https://lazyadmin.nl/it/ccna-200-301/

5 Best Network Simulators for Cisco Exams: CCNA, CCNP, CCIE

https://www.cbtnuggets.com/blog/career/career-progression/5-best-network-simulators-for-cisco-exams-ccna-ccnp-and-ccie

CompTIA Network+

https://www.comptia.org/certifications/network

What is a Full Stack Network Engineer? https://help.nexgent.com/en/articles/587234-what-is-a-full-stack-network-engineer

Paul Browning HowToNetwork.com

https://www.howtonetwork.com/courses/cisco/cisco-ccna-implementing-and-administering-cisco-solutions/

DISCOUNT!!

https://learningnetwork.cisco.com/s/cisco-certifications-and-training-offers

Mock/Sample Exams

Books

Cisco CCNA Certification: Exam 200-301 2 Volume Set Paperback – 19 Apr 2020 by Todd Lammle (Author)

https://www.amazon.co.uk/dp/1119677610/

https://blackwells.co.uk/bookshop/product/Understanding-Cisco-Networking-Technologies-Volume-1-by-Todd-Lammle-author/9781119659020

Companion site http://www.ciscopress.com/title/9780135792735

From Routing TCP/IP, Volume II: CCIE Professional Development, 2nd Edition, in which author Jeff Doyle covers the basic operation of BGP:

http://www.ciscopress.com/articles/article.asp?p=2738462

https://computingforgeeks.com/best-ccna-rs-200-125-certification-preparation-books-2019/?amp

Cisco dCloud

Interesting articles

Fake Cisco switch (WS-2960X-48TS-L) analysis by F-Secure

https://labs.f-secure.com/publications/the-fake-cisco/